Thu, 03 Sep 2020 09:44:46

Staff

With US elections imminent, Microsoft has unveiled technology meant to help combat misinformation at this crucial time. The company has announced a new deepfake detection tool called Microsoft Video Authenticator, which is designed to help recognize synthetic images and videos created by AI and circulated on the internet.

For those who don't know, deepfake technology is used to swap people's faces in videos and make them appear to say things they never said. While the technology has legitimate uses, deepfakes are notorious as a source of fake porn videos featuring celebrities and face-swapped videos of politicians saying controversial things.

On top of that, deepfake technology keeps improving, and the results are becoming harder for even experts to detect. That is why Microsoft is pitting AI against AI in an attempt to spot deepfakes.

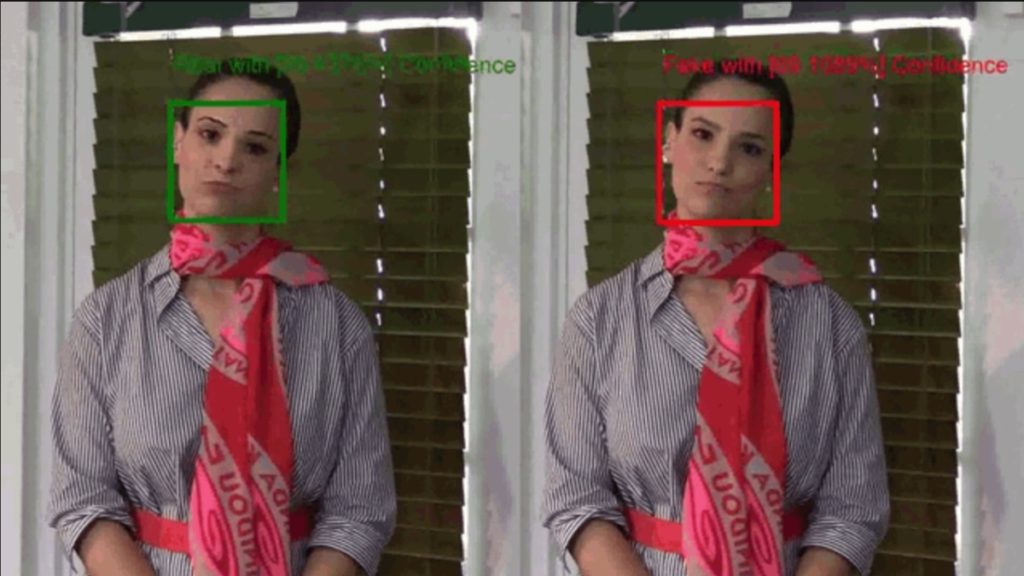

Microsoft Video Authenticator analyzes a given image or video and generates what Microsoft calls a trust score: a percentage indicating how likely it is that the content was artificially manipulated.

The tool can analyze video and provide a confidence score for each frame in real-time. Microsoft Research developed it in collaboration with Microsoft's Responsible AI team and the AETHER committee.
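To illustrate how per-frame scores like these might be used, here is a minimal Python sketch. It does not call Video Authenticator, whose interface is not described here. The function name `flag_suspect_frames`, the 0-to-1 score scale, the 0.5 threshold, and the sample scores are all assumptions made up for illustration.

```python
from typing import Iterable, List, Tuple


def flag_suspect_frames(
    scores: Iterable[float], threshold: float = 0.5
) -> List[Tuple[int, float]]:
    """Return (frame_index, score) pairs whose score meets the threshold.

    Hypothetical helper: assumes each score is in [0, 1] and that a
    higher score means the frame is more likely to be manipulated.
    """
    return [(i, s) for i, s in enumerate(scores) if s >= threshold]


# Made-up per-frame scores, one per video frame (not real tool output).
frame_scores = [0.05, 0.10, 0.82, 0.91, 0.07]

suspects = flag_suspect_frames(frame_scores, threshold=0.5)
print(suspects)  # [(2, 0.82), (3, 0.91)]
```

Scoring each frame separately means a viewer could be pointed to the specific moments in a clip that look altered, rather than getting a single verdict for the whole video.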

Microsoft said in a blog post that the tool “works by detecting the blending boundary of the deepfake and subtle fading or greyscale elements that might not be detectable by the human eye.”

Redmond has also announced a second tool, built into Microsoft Azure, that lets content publishers add digital hashes and certificates to their content. A reader, which can take the form of a browser extension, then checks this data to verify that the content is authentic. The BBC recently announced plans to implement this technology through Project Origin.
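The hash-checking idea can be sketched in a few lines of Python. This is only an illustration of the general technique, not Microsoft's implementation: the choice of SHA-256 and the function names `content_hash` and `matches_published_hash` are assumptions, and the certificate step, which would prove who published the hash, is left out entirely.

```python
import hashlib
import hmac


def content_hash(data: bytes) -> str:
    """Publisher side: compute a hex digest of the content (SHA-256 assumed)."""
    return hashlib.sha256(data).hexdigest()


def matches_published_hash(data: bytes, published_hash: str) -> bool:
    """Reader side: recompute the digest and compare it to the published one."""
    return hmac.compare_digest(content_hash(data), published_hash)


# Placeholder bytes standing in for a real media file.
original = b"bytes of the published video"
published = content_hash(original)

print(matches_published_hash(original, published))                # True
print(matches_published_hash(original + b" edited", published))   # False
```

Any change to the content, even a single byte, produces a different digest, which is why a reader can tell that a file no longer matches what the publisher released.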
